TOM SHANNON

ABOUT

I'm Tom, a multidisciplinary technologist and artist. I currently work as a software developer and consultant at ThoughtWorks, based in Chicago. I specialize in machine learning, cloud computing, mobile development, and technology-driven art installations.

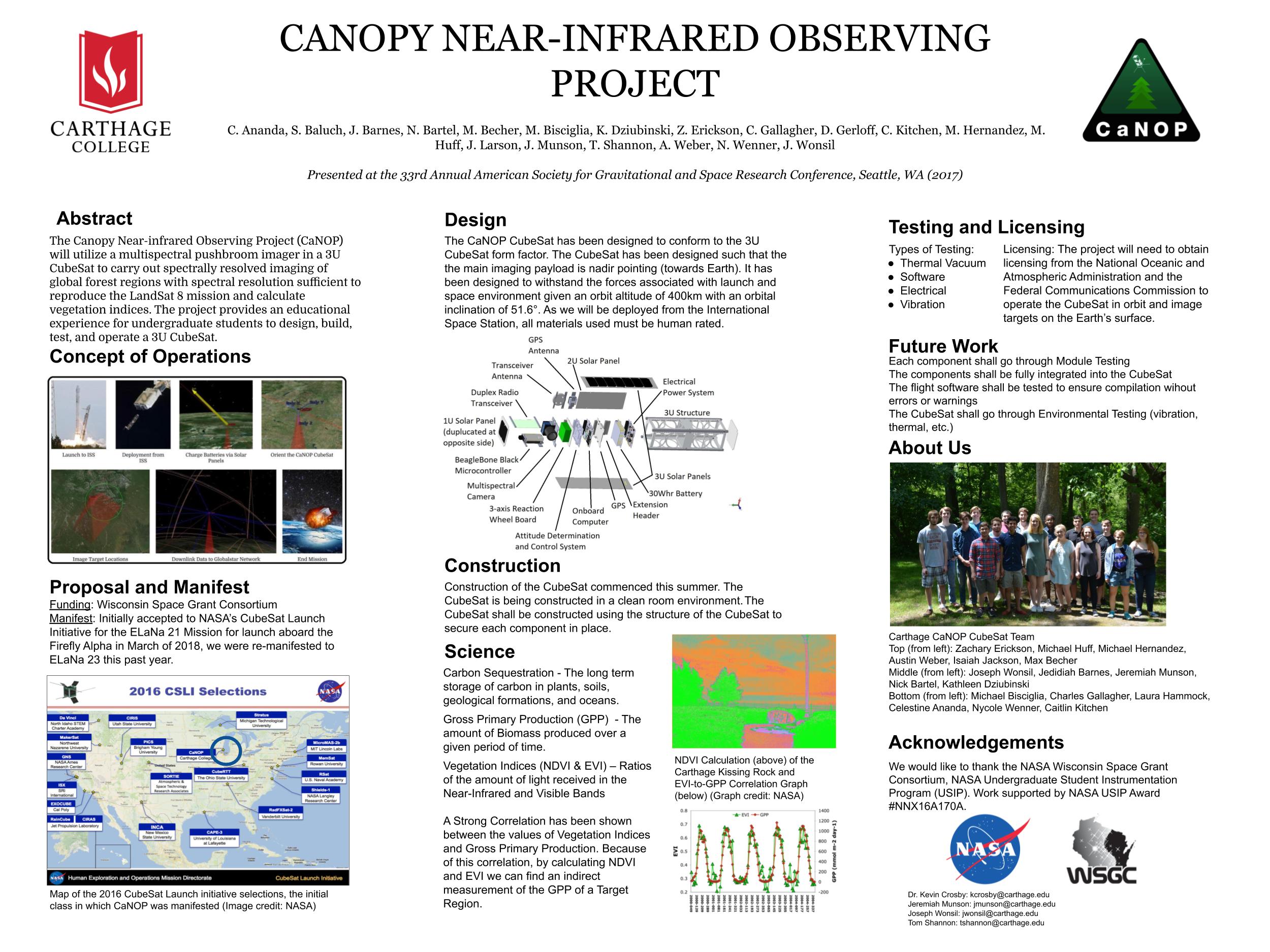

I earned my B.A. in physics and computer science from Carthage College. During my studies, I helped lead several NASA-funded research projects, which involved building suborbital rocket payloads, high-powered rockets, and a small Earth-observing satellite.

Since then, I have collaborated with artists to create unique technology-driven art installations that push the boundaries of what art can do. This work includes emotion-driven film, eye-gaze tracking on mobile devices, and re-creating lost ancestry records using generative adversarial networks. Some of these pieces have been exhibited at venues such as the Cooper Hewitt Smithsonian Museum, Ars Electronica, BOZAR, and Science Gallery Dublin. For future projects, I am interested in continuing to create generative artwork that addresses social, economic, and cultural issues in our society.